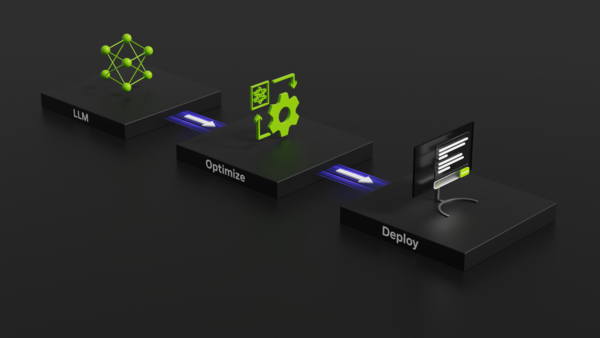

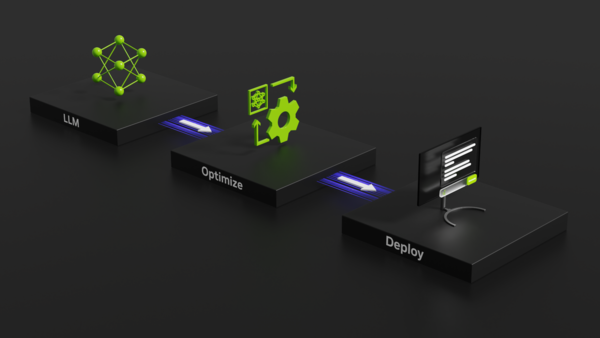

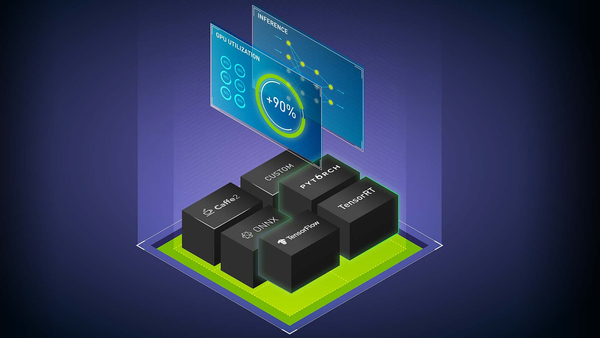

Top 5 Reasons Why Triton is Simplifying Inference

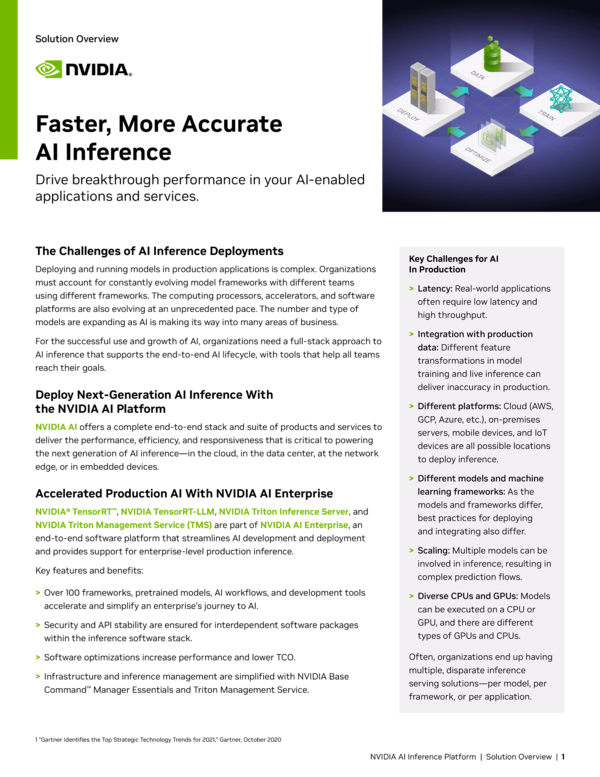

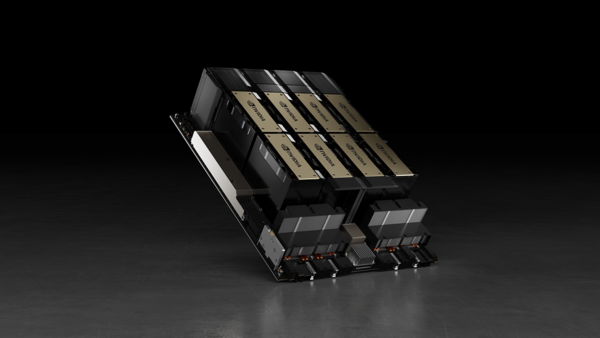

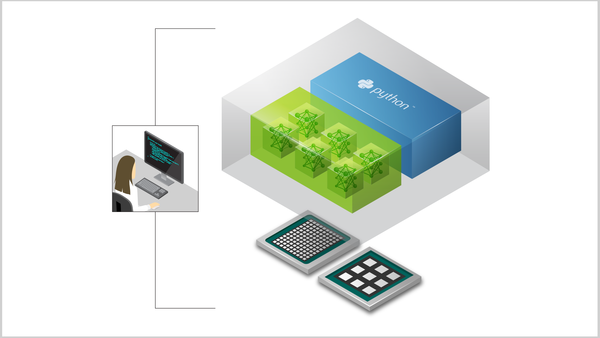

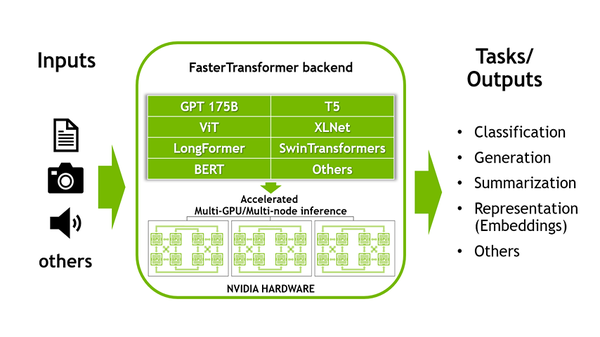

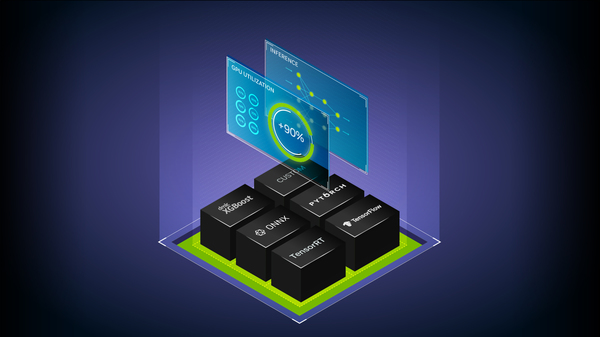

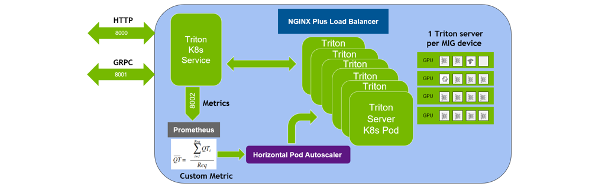

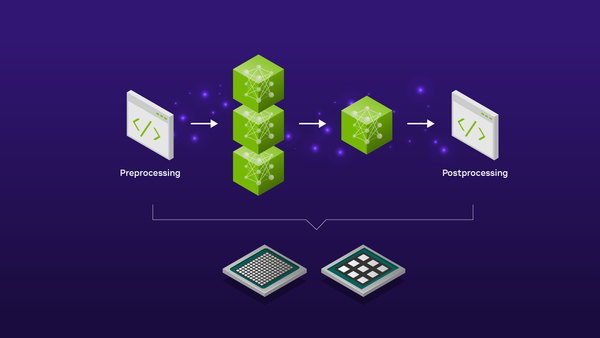

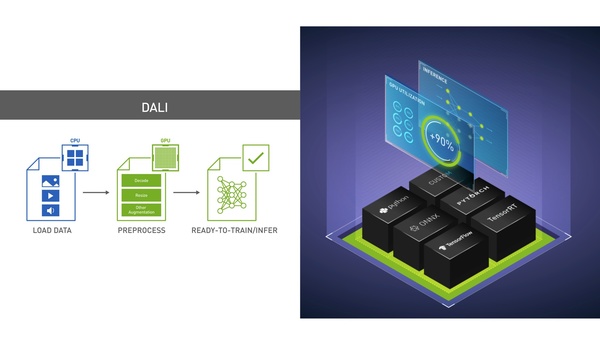

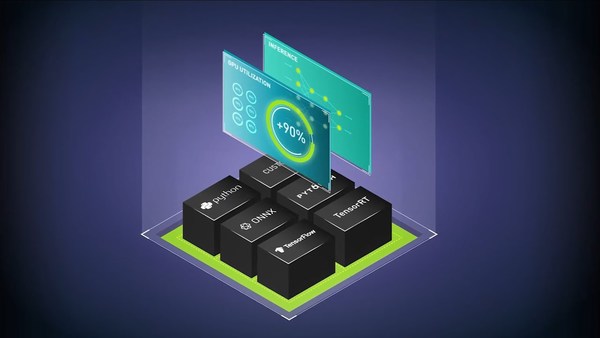

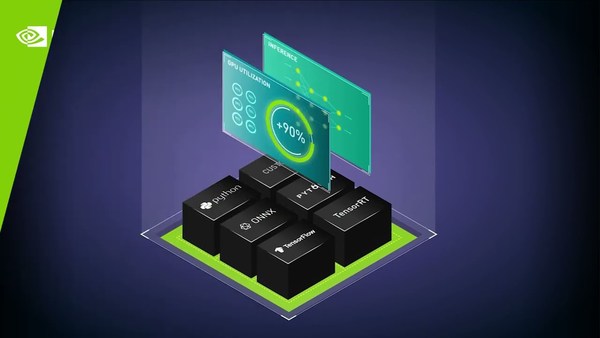

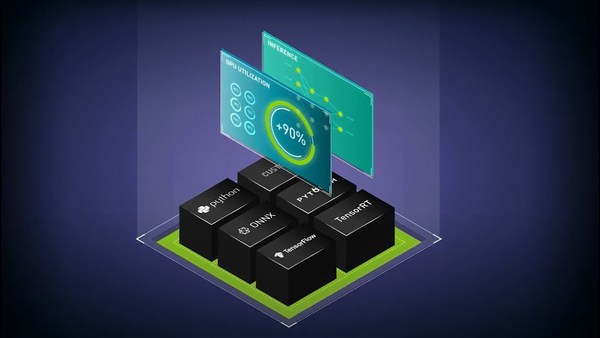

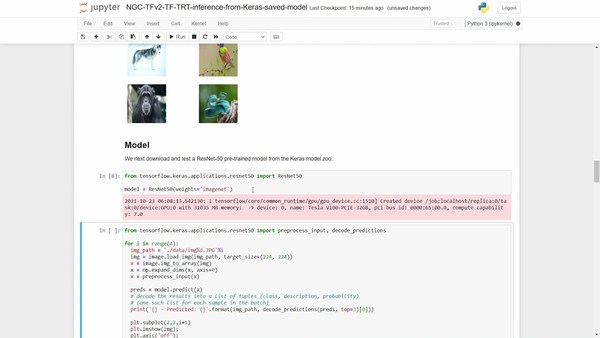

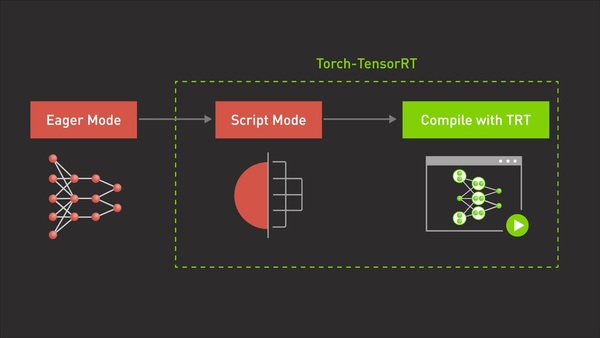

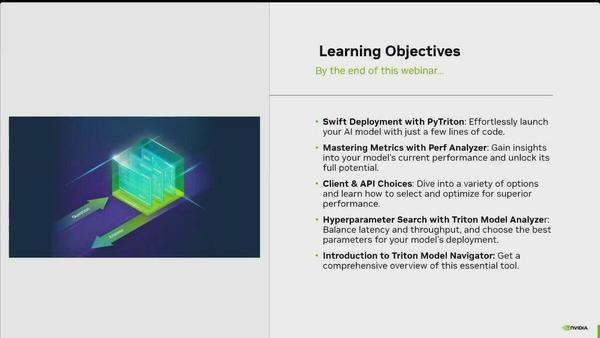

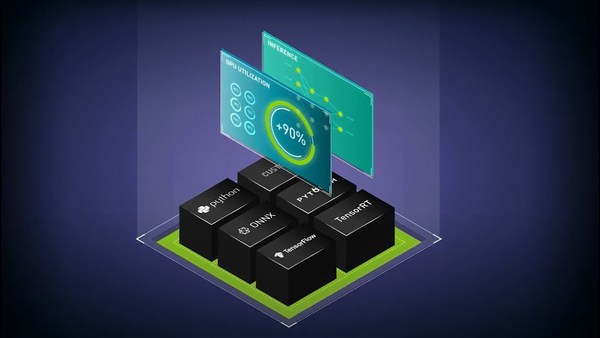

NVIDIA Triton Inference Server simplifies the deployment of AI models at scale in production. It is open-source inference serving software that lets teams deploy trained AI models from any framework, from local storage or a cloud platform, on any GPU- or CPU-based infrastructure.

Get in Touch

Learn more about purchasing our AI inference software for production deployment.