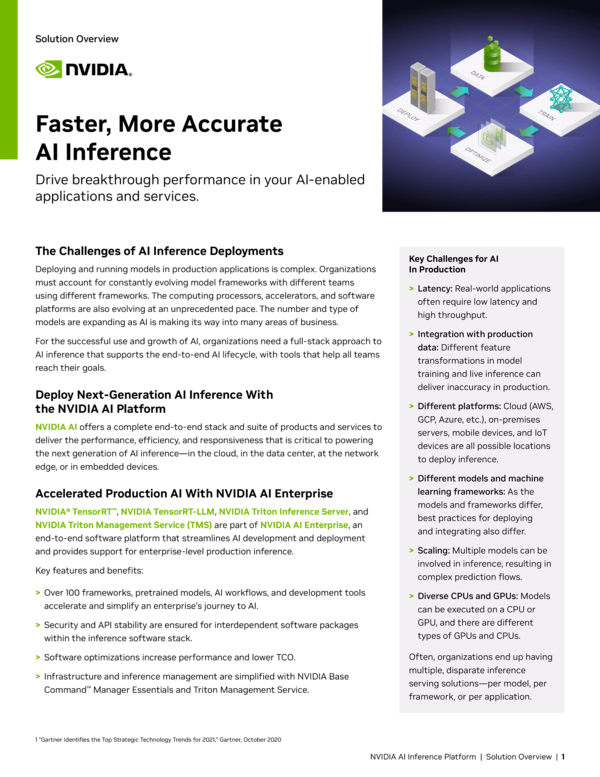

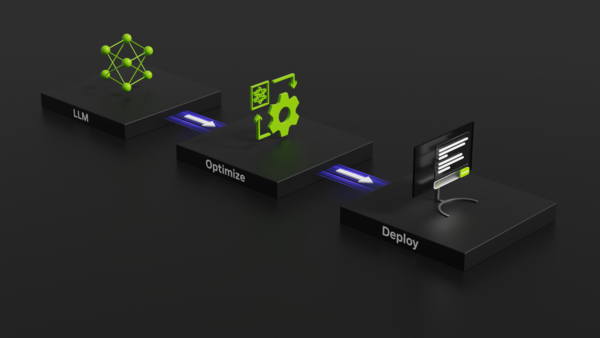

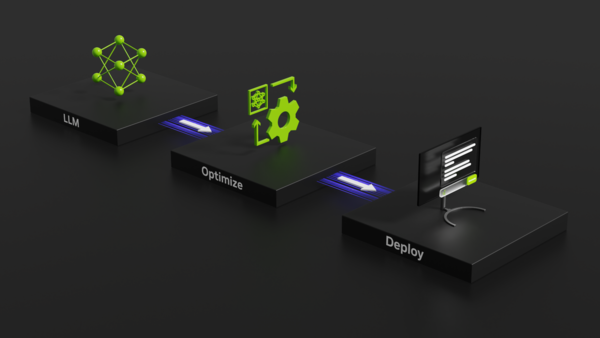

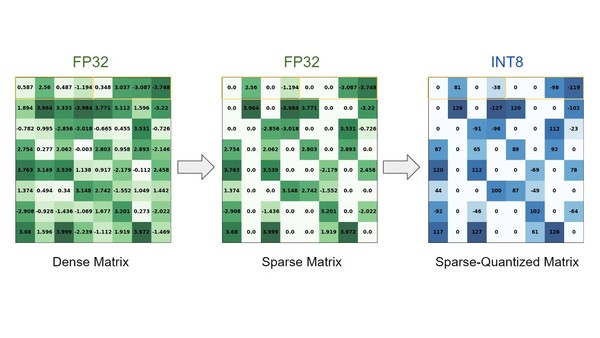

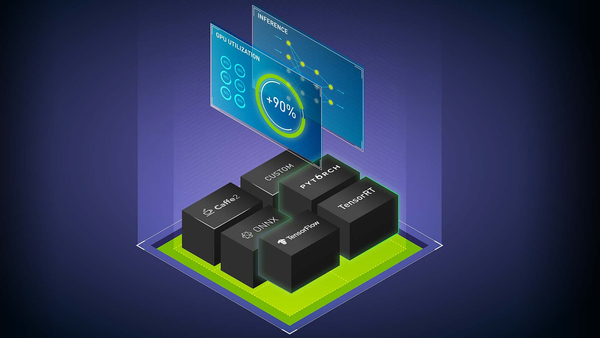

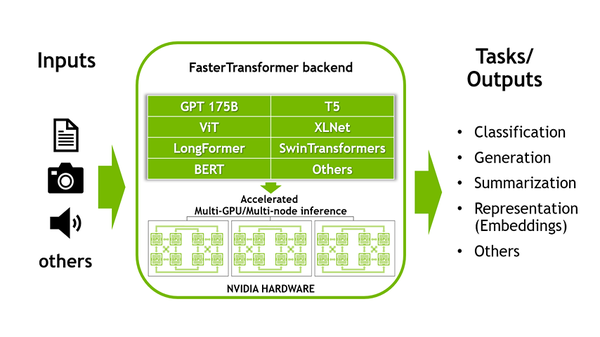

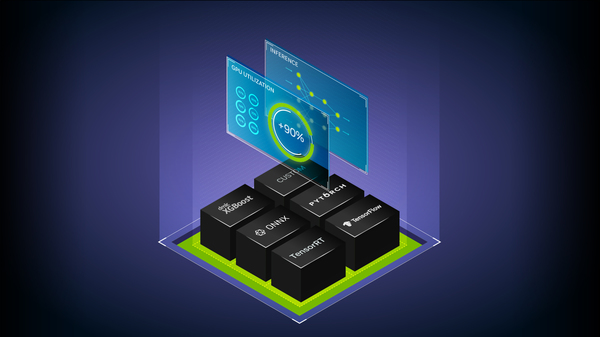

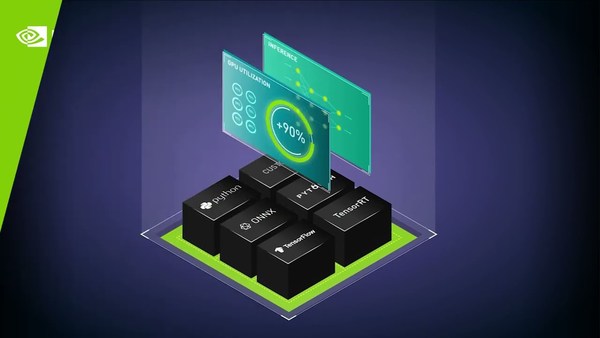

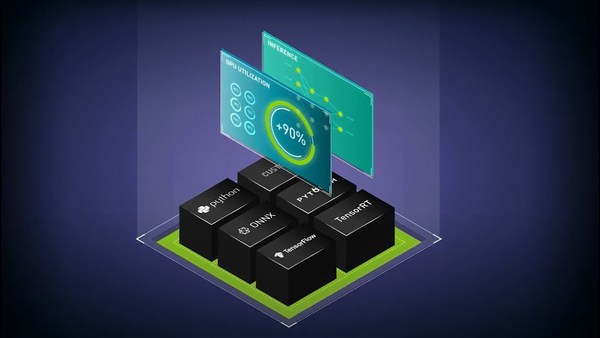

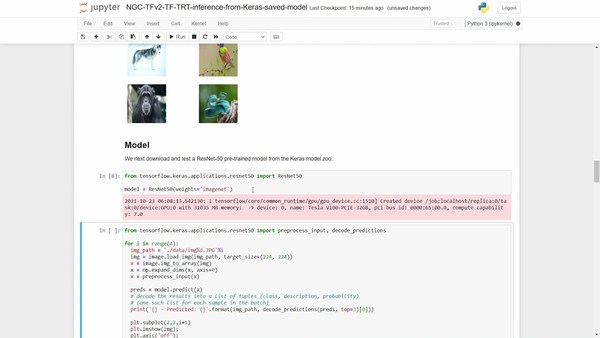

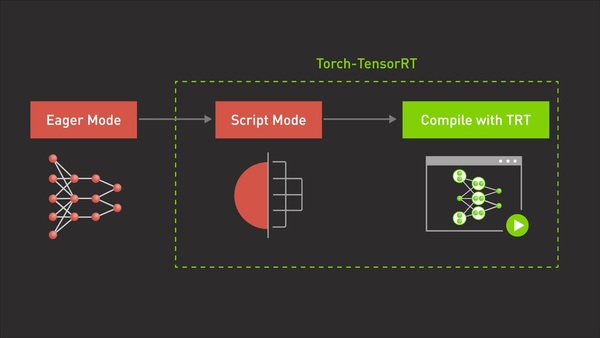

Inference Model Serving for Highest Performance

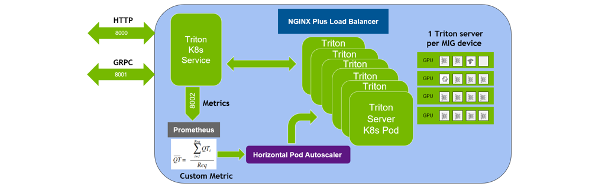

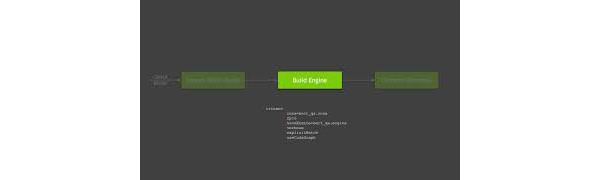

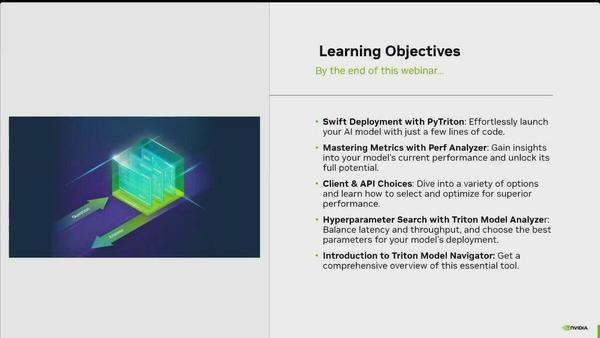

High-performance model inference is often critical to business success. See how eBay built an automated CI/CD pipeline with Tekton and a model management system, then adopted Triton as its primary GPU model serving runtime to improve inference performance and manage multiple model versions.
